Introducing Humanoid Vision Agent: The First AI Agent With Eyes

March 19, 2026

5 Minutes


An AI agent should never ask a question it could have answered on its own.

That sounds simple but has deep implications for how you build a Voice AI agent.

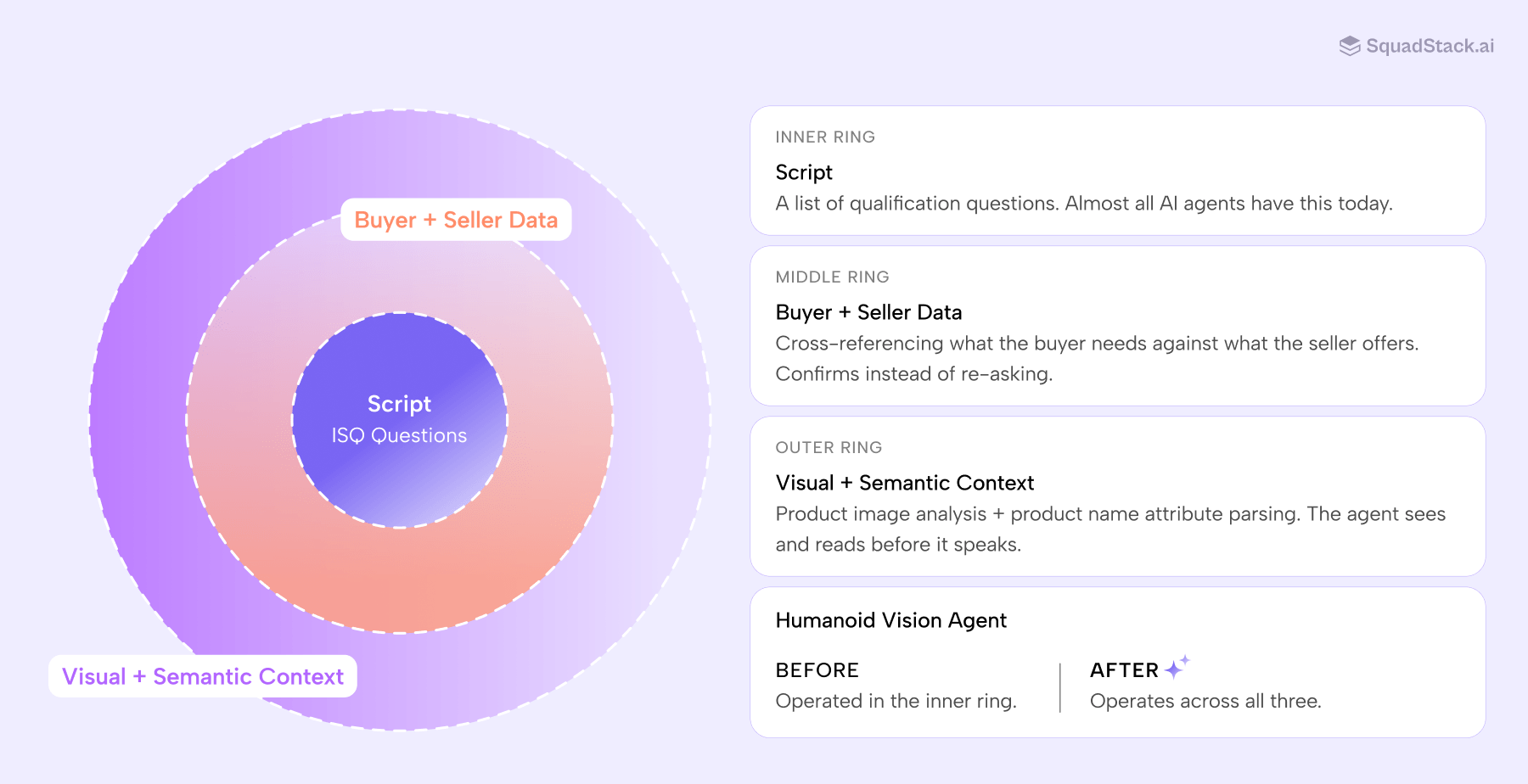

It means the agent can't just have a script. It needs context. It needs to know what the buyer was looking at: the specific product, its color, its material, its form factor. What the seller actually stocks. What the product name already tells you. It needs to show up to the call prepared, the way a great sales rep would.

Most AI agents in production today don't do this. Every call starts from zero. No visual awareness. No data cross-referencing. No reading of what's already in the listing. Just a list of questions and a voice that's good at reading them.

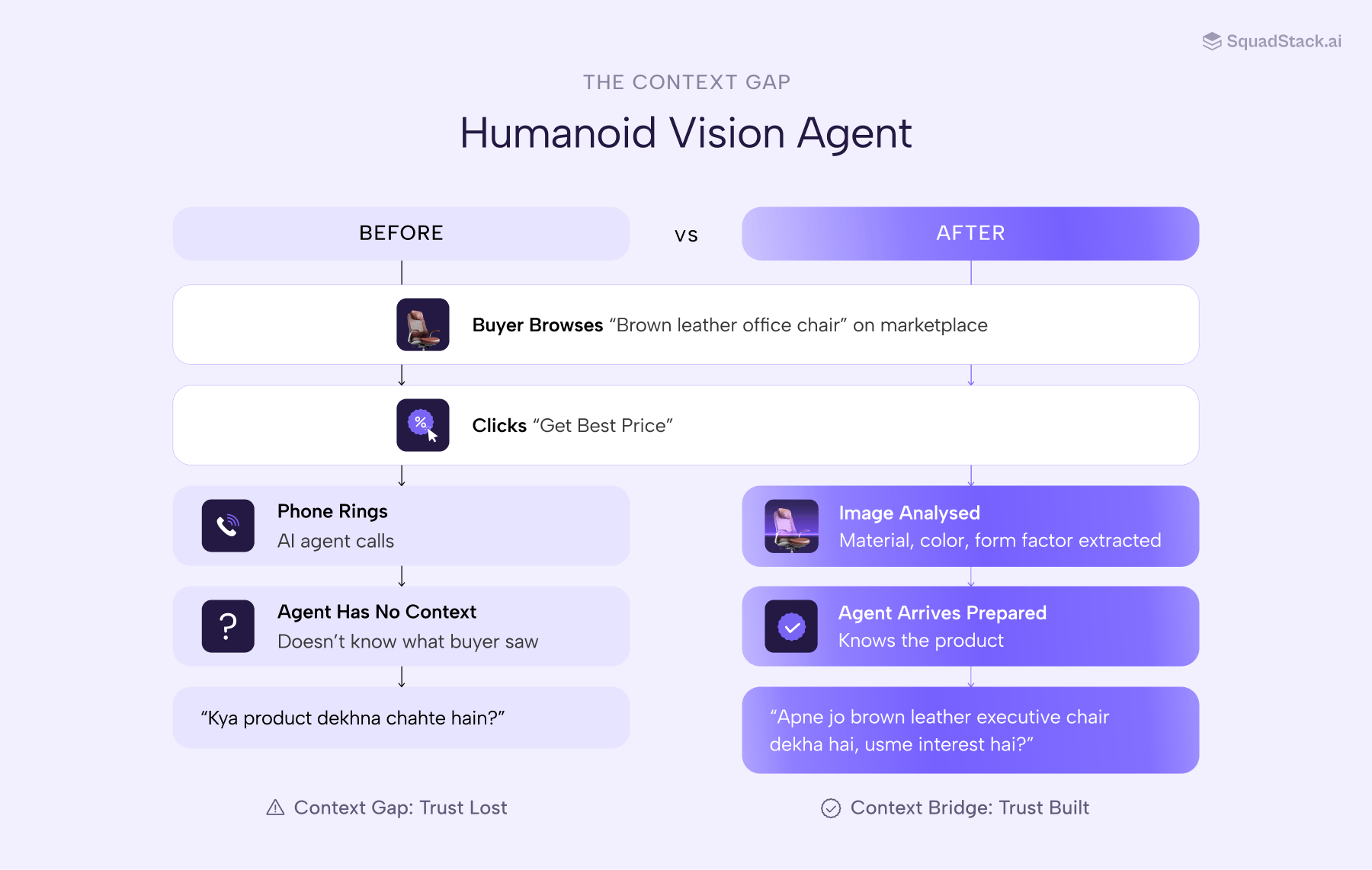

A buyer spends five minutes studying a product listing. They notice the finish, the size, the color. They click "Get Best Price." The call connects in seconds. First thing the agent says: "Aap kaunsa product dhundh rahe hain?" ("Which product are you looking for?")

Everything the buyer just looked at, gone. That's the context gap. And it's the default state of most Voice AI agents in production today.

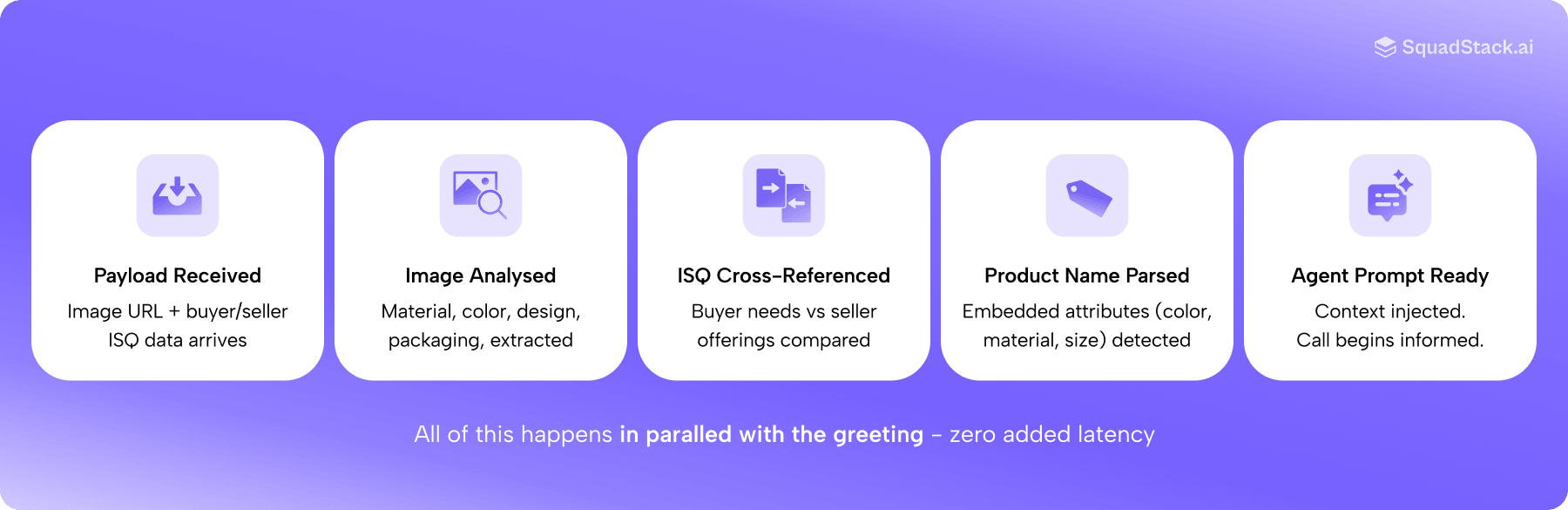

With Humanoid Vision Agent, we've rebuilt our agent around a different idea: context before conversation.

The agent gathers understanding before the call starts and uses it throughout. The result is calls that are shorter, sharper, and feel fundamentally different to the buyer on the other end.

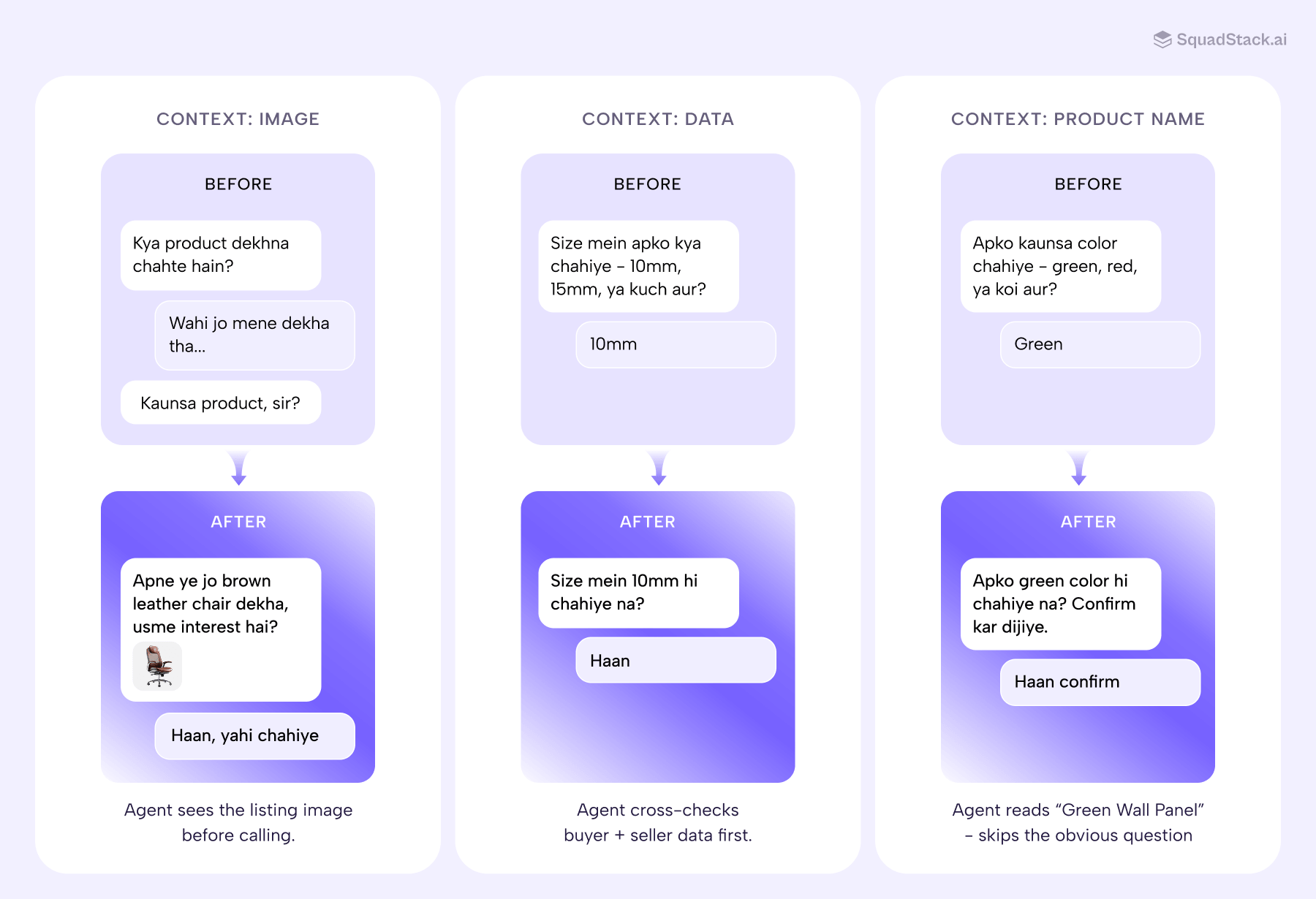

What Context-First Looks Like in Practice

Context-first isn't a single feature. It's an architectural decision that changes what the agent does before it speaks. In Humanoid Vision Agent, it shows up in three ways.

1. Visual Awareness: The Agent Sees the Product

When a call payload includes a product image, Humanoid Vision Agent analyses it before the buyer picks up. Material, color, finish, structural details, and packaging are all extracted and injected into the agent's context before the first word is spoken.

So when a buyer is calling about a brown leather executive chair with chrome accents and five caster wheels, the agent already knows that. It can reference the upholstery. It can confirm the finish. It understands what the buyer is picturing, in the same specific, visual way the buyer does.

The conversation feels informed from the first exchange, not because the agent asked better questions, but because it didn't need to ask them at all.
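As a rough illustration of "extracted and injected", here is a minimal Python sketch. It is not our production code: `CallPayload`, `image_attributes`, and `build_pre_call_context` are hypothetical names, and the image-analysis step is assumed to have already produced a plain dictionary of attributes.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class CallPayload:
    """Hypothetical call payload; field names are illustrative only."""
    product_name: str
    # Assumed output of an upstream image-analysis step (not shown here).
    image_attributes: Dict[str, str] = field(default_factory=dict)


def build_pre_call_context(payload: CallPayload) -> str:
    """Turn the payload into text the agent can read before the call starts."""
    lines = [f"Product: {payload.product_name}"]
    # Sort keys so the generated context is stable from call to call.
    for key in sorted(payload.image_attributes):
        lines.append(f"Observed {key}: {payload.image_attributes[key]}")
    return "\n".join(lines)


payload = CallPayload(
    product_name="Executive Chair",
    image_attributes={"material": "leather", "color": "brown"},
)
context = build_pre_call_context(payload)
print(context)
```

The point of the sketch is the ordering: the context string exists before the first word is spoken, so the agent never has to ask for what the image already shows.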

2. Data Cross-Referencing: Confirm, Don't Interrogate

Before asking any qualification question, Humanoid Vision Agent checks what the buyer needs against what the seller actually offers. If there's only one matching option, it confirms instead of reading the full list.

Without Humanoid Vision Agent: "Size mein aapko kya chahiye, 10mm, 15mm ya kuch aur?" ("What size do you need: 10mm, 15mm, or something else?")

With Humanoid Vision Agent: "Size mein aapko 10mm hi chahiye na?" ("You need the 10mm size, right?")

Three seconds saved per question. But more importantly, the buyer stops feeling interrogated and starts feeling understood.
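The confirm-versus-ask decision can be sketched in a few lines of Python. This is an assumption-laden illustration, not the agent's actual logic: `qualification_prompt` is a hypothetical helper, matching is a simple case-insensitive comparison, and the prompts are in English for readability.

```python
from typing import List, Optional, Tuple


def qualification_prompt(
    field_name: str,
    seller_options: List[str],
    buyer_value: Optional[str] = None,
) -> Tuple[str, str]:
    """Return ("confirm", text) when exactly one option matches, else ("ask", text)."""
    # Keep every seller option if nothing is known about the buyer's need;
    # otherwise keep only the options that match it.
    matches = [
        option
        for option in seller_options
        if buyer_value is None or option.lower() == buyer_value.lower()
    ]
    if len(matches) == 1:
        return "confirm", f"You need {matches[0]} for {field_name}, right?"
    # Zero or several matches: fall back to an open question.
    options = matches or seller_options
    return "ask", f"Which {field_name} do you need: {', '.join(options)}, or something else?"


print(qualification_prompt("size", ["10mm", "15mm"], buyer_value="10mm"))
print(qualification_prompt("size", ["10mm", "15mm"]))
```

When the buyer's need does not match anything the seller stocks, the sketch falls back to the open question rather than confirming something wrong.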

3. Product Name Parsing: Read the Listing, Skip the Obvious

If a product is called "Green Wall Panel" and the qualification flow has a color field, asking "what color do you want?" isn't qualification. It's redundancy.

Humanoid Vision Agent reads the product name for embedded attributes and confirms instead of re-asking.

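Without Humanoid Vision Agent: "Aapko konsa color chahiye, green, red ya kuch aur?" ("Which color do you want: green, red, or something else?")

With Humanoid Vision Agent: "Aapko green color hi chahiye na? Confirm kar dijiye." ("You want the green color, right? Please confirm.")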
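One simple way to read attributes out of a product name is a whole-word vocabulary match. The Python sketch below assumes a hypothetical per-field vocabulary (`vocabulary`) and a hypothetical helper name (`attributes_from_name`); a real system could use something more sophisticated.

```python
import re
from typing import Dict, List


def attributes_from_name(
    product_name: str, vocabulary: Dict[str, List[str]]
) -> Dict[str, str]:
    """Find known attribute values that appear as whole words in the name."""
    found: Dict[str, str] = {}
    for field_name, values in vocabulary.items():
        for value in values:
            # \b keeps "green" from matching inside words like "Evergreen".
            pattern = rf"\b{re.escape(value)}\b"
            if re.search(pattern, product_name, flags=re.IGNORECASE):
                found[field_name] = value
                break  # first match wins for this field
    return found


# Illustrative vocabulary; the values are assumptions, not a real catalogue.
vocabulary = {"color": ["green", "red"], "material": ["leather", "steel"]}
print(attributes_from_name("Green Wall Panel", vocabulary))
```

Any field found this way can go straight to a confirmation prompt instead of an open question.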

None of these are technically complex. That's the point. The hard part wasn't building them. It was recognising that the problem wasn't speech quality. It was context quality.

Why This Matters Beyond the Feature Set

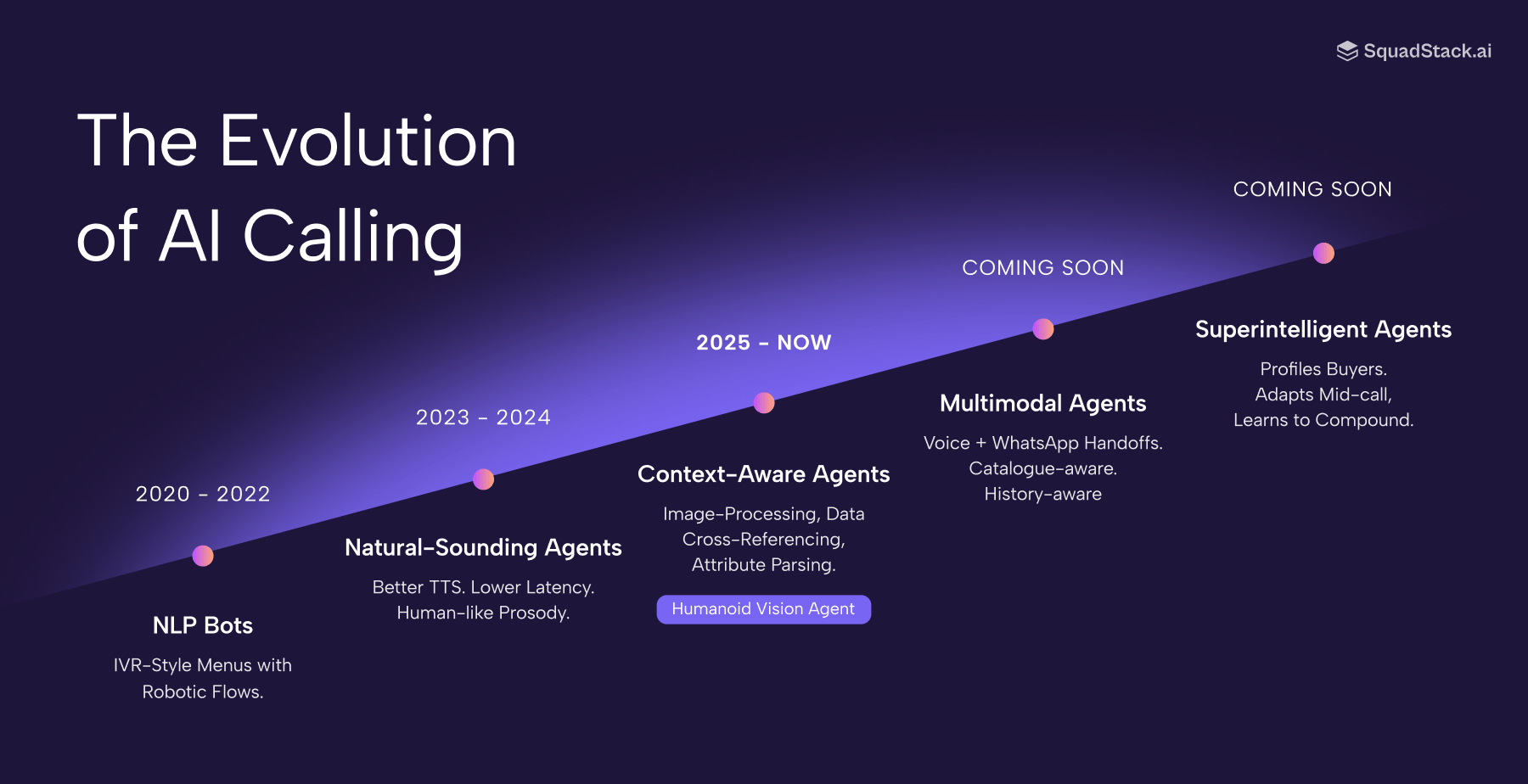

AI calling has spent the last few years in a naturalness arms race. Better TTS. Lower latency. More human-like prosody. That work matters, and we've invested heavily in it. But naturalness is converging. We expect that within 18 months, every serious platform will sound close to indistinguishable from a human.

When that happens, the differentiator won't be how the agent sounds. It'll be what the agent understands before it opens its mouth.

Every redundant question is a signal to the buyer that the system hasn't done its homework. Every confirmation that lands right signals that it has. At millions of calls a day, these signals compound into something measurable: shorter calls, more patient buyers, higher qualified conversion rates.

What's Ahead

Context-first is a direction, not a destination.

The next step is multimodal handoffs. Voice is powerful, but it's not the right channel for every moment in a call. Product names can be technical, non-English, or easy to mishear. For these moments, Humanoid Vision Agent will soon trigger a WhatsApp confirmation flow mid-call. The buyer types the product name, and the call resumes with both sides aligned.

We're also expanding the types of context the agent can consume before a call: seller catalogues, buyer history, category-level signals.

The principle stays the same. The more the agent knows before it speaks, the less the buyer has to explain.

If you're running high-volume AI calls and want your agent to show up informed, not scripted, we'd love to show you what this looks like in practice.